Rank Tests & Permutation Inference

Distribution-free hypothesis testing through ranks and permutations — Wilcoxon, Mann-Whitney, Kruskal-Wallis, Pitman ARE, Hodges-Lehmann, and randomization inference for A/B testing

From the Sign Test to Wilcoxon Signed-Rank

The simplest hypothesis test you can run on paired data (pre-treatment vs post-treatment, twin-pair A vs twin-pair B, before-and-after readings on the same subject) is the sign test: count how many differences came out positive and ask if that count is consistent with random chance. The test makes no distributional assumption beyond continuity and symmetry around zero, so it works under conditions where Gaussian-based tests would fail. But it also throws away the magnitudes: a barely positive difference counts the same as an enormous one. Wilcoxon’s signed-rank statistic recovers the magnitude information through ranks while keeping the distribution-free property. Both tests turn out to be special cases of the more general permutation framework we develop in §2.

Definition 1 (Sign-Test Statistic).

For paired differences $D_1, \dots, D_n$, the sign-test statistic is the count of positive differences,

$$S = \sum_{i=1}^{n} \mathbf{1}\{D_i > 0\},$$

where $\mathbf{1}\{A\}$ is the indicator of event $A$.

Theorem 1 (Sign-Test Null Distribution).

Suppose $D_1, \dots, D_n$ are independent and each $D_i$ is continuously distributed and symmetric about $\theta$. Then under $H_0\colon \theta = 0$ (the common median is $0$),

$$S \sim \mathrm{Binomial}(n, 1/2).$$

Proof.

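By continuity, $P(D_i = 0) = 0$, so $\operatorname{sign}(D_i)$ is well-defined a.s. Symmetry of $D_i$ about $0$ gives $P(D_i > 0) = P(D_i < 0) = 1/2$. Independence of the $D_i$ then makes the indicators $\mathbf{1}\{D_i > 0\}$ iid Bernoulli(1/2), and their sum is $\mathrm{Binomial}(n, 1/2)$.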

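∎

Definition 2 (Wilcoxon Signed-Rank Statistic).

With paired differences $D_1, \dots, D_n$ having no ties in absolute value, let $R_i$ denote the rank of $|D_i|$ in the absolute-value sample $|D_1|, \dots, |D_n|$ (so $R_i \in \{1, \dots, n\}$). The Wilcoxon signed-rank statistic is the sum of ranks at positive differences,

$$W^+ = \sum_{i=1}^{n} R_i \, \mathbf{1}\{D_i > 0\}.$$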

Theorem 2 (Signed-Rank Null Distribution).

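Under $H_0$ (paired differences symmetric about $0$, no ties in $|D_i|$), $W^+$ is distributed as $\sum_{k=1}^{n} k B_k$ with $B_1, \dots, B_n$ iid Bernoulli(1/2). Consequently,

$$\mathbb{E}[W^+] = \frac{n(n+1)}{4}, \qquad \operatorname{Var}(W^+) = \frac{n(n+1)(2n+1)}{24},$$

and the exact null distribution is symmetric about $n(n+1)/4$.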

Proof.

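Condition on the absolute ranks $(R_1, \dots, R_n)$ (which form a permutation of $\{1, \dots, n\}$). Symmetry of each $D_i$ about $0$ gives $P(D_i > 0 \mid |D_i|) = 1/2$, and independence of the $D_i$ gives that the signs are iid uniform on $\{-1, +1\}$ conditional on $(|D_1|, \dots, |D_n|)$. Define $B_i = \mathbf{1}\{D_i > 0\}$, so the $B_i$ are iid Bernoulli(1/2) conditionally and unconditionally. Then

$$W^+ = \sum_{i=1}^{n} R_i B_i = \sum_{k=1}^{n} k \, B_{\pi^{-1}(k)} \overset{d}{=} \sum_{k=1}^{n} k \, B_k,$$

because $(B_{\pi^{-1}(1)}, \dots, B_{\pi^{-1}(n)})$ is again iid Bernoulli(1/2) for any permutation $\pi$ (which here maps a fixed index $i$ to its rank position $R_i$).

The moments follow termwise. Each $k B_k$ has mean $k/2$ and variance $k^2/4$, and the $B_k$ are independent, so

$$\mathbb{E}[W^+] = \frac{1}{2} \sum_{k=1}^{n} k = \frac{n(n+1)}{4}, \qquad \operatorname{Var}(W^+) = \frac{1}{4} \sum_{k=1}^{n} k^2 = \frac{n(n+1)(2n+1)}{24}.$$

For symmetry: the sign-flip $B_k \mapsto 1 - B_k$ is a bijection on $\{0, 1\}^n$ that maps $W^+$ to $n(n+1)/2 - W^+$. Hence the null distribution is symmetric about its mean $n(n+1)/4$.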

∎

A concrete example illustrates the workflow. Take $n = 8$ paired differences from a treatment study.

Five of the eight are positive, so $S = 5$, and the sign-test two-sided $p$-value from $\mathrm{Binomial}(8, 1/2)$ is $2 \cdot 93/256 \approx 0.727$, well above $\alpha = 0.05$. The Wilcoxon signed-rank statistic uses the absolute ranks $R_i$ and sums the ranks at positive differences to obtain $W^+$. Enumerating all $2^8 = 256$ sign vectors gives a discrete null with mean $18$ and variance $51$, against which the observed $W^+$ is referred for its two-sided $p$-value. Both tests agree the effect is not significant at $\alpha = 0.05$, but the framework (replace data with rank-and-sign information, derive the null from the symmetry group of sign flips, get an exact $p$-value from enumeration) is the structure we generalize next.

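Figure (interactive in the original): the exact Wilcoxon null at $n = 8$, centred at $\mu = n(n+1)/4 = 18$ with variance $n(n+1)(2n+1)/24 = 51$ (Theorem 2). Flipping the sign of a single difference such as $D_1$ changes $W^+$ by that difference's rank and can move it into or out of the rejection region.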
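The enumeration described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the article's notebook code: the function names and the eight differences in `d` are hypothetical (chosen only so that five of the eight are positive), and the data are assumed to have no zeros and no ties in absolute value.

```python
from itertools import product
from math import comb

def sign_test_p_two_sided(n_pos, n):
    """Two-sided sign-test p-value from Binomial(n, 1/2)."""
    k = max(n_pos, n - n_pos)
    tail = sum(comb(n, j) for j in range(k, n + 1)) / 2 ** n
    return min(1.0, 2 * tail)

def signed_rank_null(n):
    """Exact null pmf of W+: enumerate all 2^n sign vectors over ranks 1..n."""
    counts = {}
    for signs in product((0, 1), repeat=n):
        w = sum(rank * s for rank, s in zip(range(1, n + 1), signs))
        counts[w] = counts.get(w, 0) + 1
    return {w: c / 2 ** n for w, c in sorted(counts.items())}

def signed_rank_stat(d):
    """W+ for differences d (assumes no zeros and no ties in |d|)."""
    order = sorted(range(len(d)), key=lambda i: abs(d[i]))
    return sum(rank for rank, i in enumerate(order, start=1) if d[i] > 0)

def signed_rank_p_two_sided(d):
    """Null mass at least as far from the null mean as the observed W+."""
    n = len(d)
    mu = n * (n + 1) / 4
    w = signed_rank_stat(d)
    return sum(p for v, p in signed_rank_null(n).items() if abs(v - mu) >= abs(w - mu))

pmf = signed_rank_null(8)
mean = sum(w * p for w, p in pmf.items())
var = sum((w - mean) ** 2 * p for w, p in pmf.items())
print(mean, var)                      # 18.0 51.0
print(sign_test_p_two_sided(5, 8))    # 0.7265625

d = [1.2, -0.4, 0.9, 2.1, -0.3, 0.7, -1.5, 1.8]   # hypothetical differences
print(signed_rank_stat(d), signed_rank_p_two_sided(d))
```

Full enumeration costs $2^n$ evaluations, so it is only practical for small $n$; it is used here because it mirrors the proof of Theorem 2 directly.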

The Permutation Principle and Exchangeability

Theorem 2’s null distribution didn’t really need symmetric continuous differences; it needed something subtler. The joint distribution of $(D_1, \dots, D_n)$ had to be invariant under sign-flips. That kind of invariance generalises to a property called exchangeability, which is the engine driving every test in this topic and (it turns out) conformal prediction as well.

Definition 3 (Exchangeability).

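A random vector $(X_1, \dots, X_n)$ is exchangeable if for every permutation $\pi$ of $\{1, \dots, n\}$,

$$(X_{\pi(1)}, \dots, X_{\pi(n)}) \overset{d}{=} (X_1, \dots, X_n).$$

Independence and identical distribution (iid) implies exchangeability, but not conversely. Under exchangeability with no ties, the rank vector is uniformly distributed on the $n!$ permutations of $\{1, \dots, n\}$.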

Theorem 3 (Permutation-Test Exactness).

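Let $T = T(X)$ be any test statistic and $G$ a finite group of transformations such that under $H_0$ the joint distribution of $X$ is invariant under every $g \in G$, i.e., $gX \overset{d}{=} X$. Define the permutation $p$-value

$$p_{\text{perm}} = \frac{1}{|G|} \sum_{g \in G} \mathbf{1}\{T(gX) \ge T(X)\}.$$

Then under $H_0$, $P(p_{\text{perm}} \le \alpha) \le \alpha$ for every $\alpha \in [0, 1]$, with equality at values of $\alpha$ that are integer multiples of $1/|G|$ (after random tie-breaking).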

Proof.

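Under $H_0$, for any fixed $g \in G$, the random vector $gX$ has the same distribution as $X$. So the multiset of statistic values

$$\mathcal{T}(X) = \{T(hX) : h \in G\}$$

satisfies $\mathcal{T}(gX) = \mathcal{T}(X)$ as a multiset, since

$$\mathcal{T}(gX) = \{T(hgX) : h \in G\} = \{T(h'X) : h' \in G\},$$

where $h' = hg$ ranges over $G$ as $h$ does (group property). The observed statistic $T(X)$ corresponds to the identity element and is one element of $\mathcal{T}(X)$. Under $H_0$, $T(X)$ is distributionally exchangeable with each of the values $T(gX)$; this is the consequence of $gX \overset{d}{=} X$ applied to each $g$. Therefore the rank of $T(X)$ within the multiset (call it $R$, counted from the smallest value) is uniformly distributed on $\{1, \dots, |G|\}$ (with random tie-breaking).

The permutation $p$-value satisfies

$$p_{\text{perm}} = \frac{|G| - R + 1}{|G|}.$$

Since $R$ is uniform on $\{1, \dots, |G|\}$, $p_{\text{perm}}$ is uniform on $\{1/|G|, 2/|G|, \dots, 1\}$. Therefore

$$P(p_{\text{perm}} \le \alpha) = \frac{\lfloor \alpha |G| \rfloor}{|G|} \le \alpha,$$

with equality whenever $\alpha$ is a multiple of $1/|G|$.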

∎

Remark (Permutation vs Randomization Inference).

The permutation principle is sometimes called the randomization principle, and the two terms are routinely conflated. There is a real distinction. In the population-sampling context (data drawn from an infinite population), Theorem 3’s invariance assumption is exchangeability of the sample under $H_0$, a property of the joint distribution. In the finite-population or experimental context (Theorem 12 below; §8), the invariance is assured by the experimenter’s random treatment assignment, a property of the physical randomization mechanism. The two are mathematically identical (both are instances of Theorem 3 with different choices of $G$), but their justifications differ. In observational studies, exchangeability is often a much stronger assumption than experimenters realise; in randomized experiments, it is guaranteed by design. We pick this back up explicitly in §8.

The proof is short and statistic-free. That’s the power of the permutation principle: validity comes from the symmetry of the null, not from any asymptotic property of $T$. Use a $t$-statistic, a mean difference, a max correlation, anything: the permutation test wraps it in an exact level-$\alpha$ envelope.
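To make the statistic-agnostic point concrete, here is a minimal sketch of an exact two-sample permutation test by full enumeration. The function names and the toy data are illustrative assumptions. The code enumerates the distinct splits of the pooled sample rather than all orderings; each split corresponds to the same number of orderings, so the resulting $p$-value is the same.

```python
from itertools import combinations
from statistics import mean

def exact_permutation_p(x, y, stat):
    """Exact permutation p-value: share of relabellings with stat >= observed."""
    pooled = list(x) + list(y)
    m = len(x)
    observed = stat(x, y)
    n_ge = n_total = 0
    for idx in combinations(range(len(pooled)), m):
        chosen = set(idx)
        gx = [pooled[i] for i in idx]
        gy = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        n_total += 1
        if stat(gx, gy) >= observed:   # the identity relabelling always counts
            n_ge += 1
    return n_ge / n_total

def mean_diff(x, y):
    """One-sided statistic: large values favour 'y is larger'."""
    return mean(y) - mean(x)

# Toy data: the observed split is the single most extreme of the 20 splits.
p = exact_permutation_p([1, 2, 3], [4, 5, 6], mean_diff)
print(p)   # 0.05
```

Any other function of the two samples can be passed as `stat` without changing the validity argument; only the power changes.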

The permutation framework is statistic-agnostic — same procedure, three interchangeable test statistics, validity from Theorem 3 in every case. Switch the group distribution to log-normal or shrink Δ to feel the power profile shift.

Wilcoxon Rank-Sum and Mann-Whitney

The two-sample setting moves from paired data to two independent groups: observations $X_1, \dots, X_m$ from one population and $Y_1, \dots, Y_n$ from another, with $N = m + n$ pooled. Test scores from two teaching methods, activation latencies from two neural regions, conversion times for two checkout flows. The null is that the populations have the same continuous distribution; the alternative is that one is stochastically larger than the other. Pool the samples, rank them from $1$ to $N$, and take the rank-sum of one group (conventionally the smaller): that’s the Wilcoxon rank-sum statistic $W$. Mann-Whitney’s $U$ is its combinatorial twin, counting pairs $(X_i, Y_j)$ in which $X_i$ comes out smaller. They contain the same information (Theorem 4), and both inherit exact null moments from the exchangeability of pooled ranks (Theorem 5).

Definition 4 (Wilcoxon Rank-Sum Statistic).

With $X_1, \dots, X_m$ from one population and $Y_1, \dots, Y_n$ from another, pool all $N = m + n$ observations and rank them $1, \dots, N$. Let $R(Y_j)$ denote the rank of $Y_j$ in the pooled sample. The Wilcoxon rank-sum statistic is

$$W = \sum_{j=1}^{n} R(Y_j).$$

Definition 5 (Mann-Whitney $U$ Statistic).

With pooled samples as above (and continuous data, so ties have probability zero), the Mann-Whitney statistic is the count of pairs $(X_i, Y_j)$ in which $X_i$ is smaller:

$$U = \sum_{i=1}^{m} \sum_{j=1}^{n} \mathbf{1}\{X_i < Y_j\}.$$

The ratio $U/(mn)$ is an unbiased estimator of $P(X < Y)$ when $X$ and $Y$ are independent draws from the two populations.

Theorem 4 (Equivalence of $W$ and $U$).

$$W = U + \frac{n(n+1)}{2}.$$

Because $W$ and $U$ are linearly related, they yield identical tests; the choice between them is a matter of bookkeeping.

Proof.

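Sort the $Y$-sample so $Y_{(1)} < Y_{(2)} < \dots < Y_{(n)}$. The rank of $Y_{(j)}$ in the pooled sample equals $j$ (its rank within $Y$) plus the number of $X$-values strictly less than it:

$$R(Y_{(j)}) = j + \#\{i : X_i < Y_{(j)}\}.$$

Sum over $j$:

$$W = \sum_{j=1}^{n} j + \sum_{j=1}^{n} \#\{i : X_i < Y_{(j)}\} = \frac{n(n+1)}{2} + \sum_{i=1}^{m} \sum_{j=1}^{n} \mathbf{1}\{X_i < Y_j\}.$$

Each pair $(i, j)$ contributes to exactly one of the events $\{X_i < Y_j\}$ or $\{X_i > Y_j\}$ (continuity rules out ties), so

$$\sum_{i=1}^{m} \sum_{j=1}^{n} \mathbf{1}\{X_i < Y_j\} = U, \qquad \sum_{i=1}^{m} \sum_{j=1}^{n} \mathbf{1}\{X_i > Y_j\} = mn - U,$$

giving $W = U + n(n+1)/2$.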

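∎

Theorem 5 (Null Moments of $U$).

Under $H_0$ (the pooled sample is exchangeable, equivalent to $F_X = F_Y$ continuous),

$$\mathbb{E}[U] = \frac{mn}{2}, \qquad \operatorname{Var}(U) = \frac{mn(N+1)}{12}.$$

For moderately large $m$ and $n$, the normal approximation is excellent; for smaller samples, enumerate the $\binom{N}{n}$ rank assignments exactly.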

Proof.

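Write $U = \sum_{i,j} I_{ij}$ with $I_{ij} = \mathbf{1}\{X_i < Y_j\}$. Under $H_0$ every pair $(X_i, Y_j)$ is exchangeable, so $\mathbb{E}[I_{ij}] = P(X_i < Y_j) = 1/2$ by symmetry. Therefore $\mathbb{E}[U] = mn/2$.

For the variance,

$$\operatorname{Var}(U) = \sum_{i,j} \operatorname{Var}(I_{ij}) + \sum_{(i,j) \ne (i',j')} \operatorname{Cov}(I_{ij}, I_{i'j'}).$$

Variance term. Each $I_{ij}$ is Bernoulli(1/2), so $\operatorname{Var}(I_{ij}) = 1/4$. The diagonal contribution is $mn/4$.

Covariance terms split by overlap pattern:

(a) Same $i$, different $j$ ($i = i'$, $j \ne j'$). Three exchangeable continuous random variables have all $3! = 6$ orderings equally likely, so

$$\mathbb{E}[I_{ij} I_{ij'}] = P(X_i < \min(Y_j, Y_{j'})) = \frac{2}{6} = \frac{1}{3},$$

giving $\operatorname{Cov}(I_{ij}, I_{ij'}) = 1/3 - 1/4 = 1/12$.

(b) Different $i$, same $j$ ($i \ne i'$, $j = j'$). Symmetric to (a): $\operatorname{Cov}(I_{ij}, I_{i'j}) = 1/12$.

(c) All distinct ($i \ne i'$, $j \ne j'$). $I_{ij}$ and $I_{i'j'}$ are functions of disjoint independent pairs, so they are independent and $\operatorname{Cov}(I_{ij}, I_{i'j'}) = 0$.

Counting the pairs. Same-$i$ ordered pairs: $m$ choices for the shared $i$, $n(n-1)$ ordered choices for the $j$-pair, total $mn(n-1)$. Same-$j$ pairs by symmetry: $mn(m-1)$. Combining,

$$\operatorname{Var}(U) = \frac{mn}{4} + \frac{mn(n-1) + mn(m-1)}{12} = \frac{mn\,[3 + (N - 2)]}{12} = \frac{mn(N+1)}{12}.$$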
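The moment formulas can be checked by brute force for small samples. The sketch below assumes the convention that $U$ counts pairs with $X_i < Y_j$ and that $n$ is the size of the $Y$-sample; under the null every set of $n$ pooled ranks is equally likely for the $Y$'s, so the exact pmf follows from enumerating those sets. The function name is hypothetical.

```python
from fractions import Fraction
from itertools import combinations

def u_null_pmf(m, n):
    """Exact null pmf of U: every set of n pooled ranks for Y is equally likely."""
    N = m + n
    counts = {}
    for y_ranks in combinations(range(1, N + 1), n):
        u = sum(y_ranks) - n * (n + 1) // 2    # U = W - n(n+1)/2
        counts[u] = counts.get(u, 0) + 1
    total = sum(counts.values())
    return {u: Fraction(c, total) for u, c in sorted(counts.items())}

m, n = 3, 4
pmf_u = u_null_pmf(m, n)
mean_u = sum(u * p for u, p in pmf_u.items())
var_u = sum((u - mean_u) ** 2 * p for u, p in pmf_u.items())
print(mean_u, var_u)   # 6 8
```

Exact rational arithmetic via `fractions.Fraction` avoids any floating-point doubt about whether the enumerated moments equal $mn/2$ and $mn(N+1)/12$.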

∎

Remark (scipy's Convention for $U$).

scipy.stats.mannwhitneyu(X, Y) returns $U$ for the first argument, counting $\#\{(i, j) : X_i > Y_j\}$, the complement of our Definition 5. The two conventions satisfy $U_{\text{scipy}} + U = mn$, encode the same information, and produce identical two-sided $p$-values; only the reported statistic differs. Practitioners who run mannwhitneyu(X, Y) and then look up “expected $U$” sometimes lose an hour to this. The fix is to pass the arguments in the order matching whichever convention you have in mind.
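The bookkeeping between the rank-sum, the pair count, and the complementary pair count is easy to verify by hand. The sketch below uses only the standard library and does not call scipy; the helper name and the data are hypothetical, the samples are assumed tie-free, and $W$ is taken to be the rank-sum of the $Y$-sample.

```python
def rank_sum_and_u(x, y):
    """Return (W, U, U_complement) for tie-free samples x and y."""
    pooled = sorted(list(x) + list(y))
    rank = {v: r for r, v in enumerate(pooled, start=1)}   # valid only without ties
    w = sum(rank[v] for v in y)                            # rank-sum of the Y sample
    u = sum(1 for xi in x for yj in y if xi < yj)          # pairs with X_i < Y_j
    u_comp = sum(1 for xi in x for yj in y if xi > yj)     # pairs with X_i > Y_j
    return w, u, u_comp

x = [1.1, 2.5, 3.7, 4.0]            # hypothetical data
y = [2.0, 3.1, 5.2, 6.3, 7.4]
w, u, u_comp = rank_sum_and_u(x, y)
n = len(y)
print(w, u, u_comp)                 # 30 15 5
print(w == u + n * (n + 1) // 2)    # True
print(u + u_comp == len(x) * n)     # True
```

If a library reports the complementary count, subtracting it from $mn$ recovers the other convention.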

A worked example with two small samples $X$ and $Y$ follows the same recipe. Pooled ranks give $W$ (the sum of the $Y$-ranks) and $U$ (the count of pairs with $X_i < Y_j$). Theorem 5’s null moments give $\mathbb{E}[U] = mn/2$ and $\operatorname{Var}(U) = mn(N+1)/12$. The two-sided exact $p$-value (scipy.stats.mannwhitneyu) is consistent with no shift between the two populations. The interactive PermutationDistributionExplorer lets you switch the underlying parametric families (normal, log-normal, exponential) and verify that $U$’s null moments hold across all of them: that’s the distribution-free promise.


Kruskal-Wallis: The $k$-Sample Extension

When there are $k \ge 3$ groups instead of $2$ (three teaching methods, four drug doses, five regional cohorts), the natural extension is the Kruskal-Wallis test. It is the rank analog of one-way ANOVA’s $F$-test: pool, rank, and look at the between-group dispersion of mean ranks. The shape of the test mirrors ANOVA exactly, with the rank substitution buying distribution-freeness without giving up the chi-squared limit.

Definition 6 (Kruskal-Wallis Statistic).

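Suppose we have $k$ groups of sizes $n_1, \dots, n_k$ with total $N = \sum_{i=1}^{k} n_i$. Pool all $N$ observations and rank them $1, \dots, N$; let $R_{ij}$ denote the rank of the $j$-th observation in group $i$, and let

$$\bar R_i = \frac{1}{n_i} \sum_{j=1}^{n_i} R_{ij}$$

be the mean rank in group $i$. The Kruskal-Wallis statistic is

$$H = \frac{12}{N(N+1)} \sum_{i=1}^{k} n_i \left( \bar R_i - \frac{N+1}{2} \right)^2.$$

The grand mean rank is $(N+1)/2$ (the average of the integers $1, \dots, N$), and the prefactor $12 / (N(N+1))$ normalises by the variance of the discrete uniform on $\{1, \dots, N\}$, putting $H$ on a scale comparable to a chi-squared.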
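As a small illustration of the definition, the statistic can be computed directly from pooled ranks. This is a sketch for tie-free data only (no tie correction is applied), and the function name and toy groups are assumptions made for the example.

```python
def kruskal_wallis_h(groups):
    """Kruskal-Wallis H for tie-free data (no tie correction applied)."""
    pooled = sorted(v for g in groups for v in g)
    rank = {v: r for r, v in enumerate(pooled, start=1)}   # valid only without ties
    N = len(pooled)
    grand = (N + 1) / 2                                    # grand mean rank
    between = sum(
        len(g) * (sum(rank[v] for v in g) / len(g) - grand) ** 2
        for g in groups
    )
    return 12 / (N * (N + 1)) * between

# Perfectly separated toy groups: mean ranks 2, 5, 8 around a grand mean of 5.
h = kruskal_wallis_h([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(h)
```

For this toy input the arithmetic is $\frac{12}{9 \cdot 10} \cdot 3\,(9 + 0 + 9) = 7.2$, which would then be referred to a $\chi^2_2$ distribution or, at such small sizes, to the exact permutation null.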

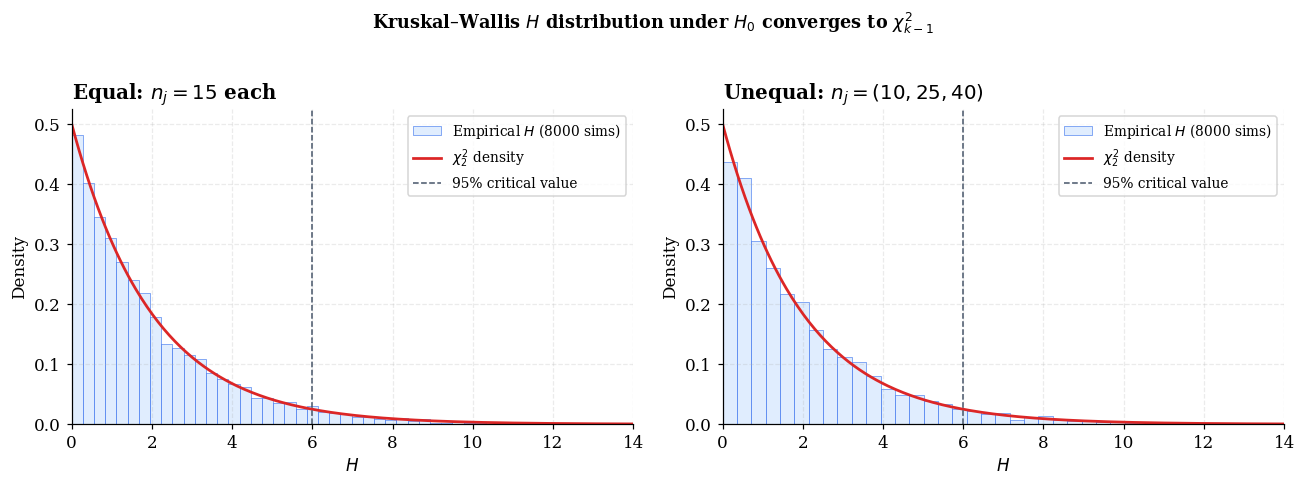

Theorem 6 (Kruskal-Wallis Asymptotic Null).

Under $H_0$ (all $k$ groups have the same continuous distribution), as all $n_i \to \infty$ with $n_i / N \to \lambda_i \in (0, 1)$,

$$H \xrightarrow{d} \chi^2_{k-1}.$$

Proof.

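Define standardised mean-rank deviations

$$Z_i = \sqrt{\frac{12\, n_i}{N(N+1)}} \left( \bar R_i - \frac{N+1}{2} \right), \qquad i = 1, \dots, k.$$

Then $H = \sum_{i=1}^{k} Z_i^2$. We compute the asymptotic distribution of $Z = (Z_1, \dots, Z_k)^\top$.

Under $H_0$ the rank vector is a uniformly random permutation of $\{1, \dots, N\}$. A single rank has mean $(N+1)/2$ and variance $(N^2 - 1)/12$. The mean rank in group $i$ averages $n_i$ ranks sampled without replacement from $\{1, \dots, N\}$, so the finite-population variance formula gives

$$\operatorname{Var}(\bar R_i) = \frac{N^2 - 1}{12\, n_i} \cdot \frac{N - n_i}{N - 1} = \frac{(N+1)(N - n_i)}{12\, n_i},$$

where $(N - n_i)/(N - 1)$ is the finite-population correction. Therefore

$$\operatorname{Var}(Z_i) = \frac{12\, n_i}{N(N+1)} \cdot \frac{(N+1)(N - n_i)}{12\, n_i} = 1 - \frac{n_i}{N} \to 1 - \lambda_i.$$

For the off-diagonal structure: the centred ranks sum to zero, so the centred group sums satisfy $\sum_{i=1}^{k} n_i \left( \bar R_i - (N+1)/2 \right) = 0$. Rescaling, the vector $V$ with $V_i = n_i \left( \bar R_i - (N+1)/2 \right)$ lies on the hyperplane $\sum_i V_i = 0$, so its covariance is singular along the all-ones direction. After the further rescaling $Z_i = V_i \sqrt{12 / (n_i N (N+1))}$,

$$\operatorname{Cov}(Z) \to \Sigma = I_k - \sqrt{\lambda}\,\sqrt{\lambda}^{\top}, \qquad \Sigma_{ii} = 1 - \lambda_i, \quad \Sigma_{ij} = -\sqrt{\lambda_i \lambda_j} \ \ (i \ne j),$$

where $\sqrt{\lambda} = (\sqrt{\lambda_1}, \dots, \sqrt{\lambda_k})^\top$. The diagonal entries match the marginal-variance calculation; the off-diagonal entries match the constraint $\sum_i \sqrt{n_i}\, Z_i = 0$ (rescaled from $\sum_i V_i = $ const).

The multivariate finite-population CLT (Hájek 1960; van der Vaart 1998 §13.2) gives

$$Z \xrightarrow{d} N_k(0, \Sigma)$$

as all $n_i \to \infty$ with $n_i / N \to \lambda_i$.

Finally, for $Z \sim N(0, \Sigma)$ with $\Sigma$ idempotent of rank $r$, the quadratic form $Z^\top Z$ is $\chi^2_r$-distributed. Here $\Sigma$ is idempotent, since $\sqrt{\lambda}^{\top} \sqrt{\lambda} = \sum_i \lambda_i = 1$, and has rank $k - 1$ (the direction $\sqrt{\lambda}$, which is the rescaled image of the all-ones direction, spans the null space). Therefore

$$H = Z^\top Z \xrightarrow{d} \chi^2_{k-1}.$$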

∎

The translation from $F$ to $H$ is essentially “replace data values with their pooled ranks.” A worked example with three equal-sized groups, drawn under $H_0$ from a common normal, gives group mean ranks and an $H$ whose $p$-value sits well within the null. Empirical type-I error tracks the nominal levels closely for both balanced and unbalanced designs.

Asymptotic Relative Efficiency (Pitman)

The rank tests of §§1, 3, 4 are valid under nearly any continuous distribution: that’s the distribution-free promise. A natural concern is that we might be paying for that generality with reduced power: under conditions where the optimal parametric test exists (the $t$-test under normality, say), are we losing meaningful sensitivity by using ranks? Pitman’s framework of asymptotic relative efficiency answers the question precisely. ARE compares the sample sizes two tests need to achieve equal power against the same local alternative; under contiguous alternatives $\theta_n = h/\sqrt{n}$, the ratio depends only on the noise density’s variance $\sigma^2$ and an integral $\int f^2$. The result is the celebrated $12\sigma^2 \left( \int f^2 \right)^2$ formula in Theorem 8 below, and the surprise is just how forgiving it is to give up the parametric optimum.

Theorem 7 (U-statistic Representation of $W^+$).

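For continuous paired differences $D_1, \dots, D_n$ with no ties in $|D_i|$,

$$W^+ = \sum_{1 \le i \le j \le n} \mathbf{1}\{D_i + D_j > 0\} = \#\left\{ (i, j) : i \le j,\ \tfrac{D_i + D_j}{2} > 0 \right\}.$$

That is, $W^+$ counts the number of Walsh averages $(D_i + D_j)/2$ that exceed zero (with the convention $i \le j$). This bridges to the Walsh-average construction we use for Hodges-Lehmann in §6.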

Proof.

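Split the pairs by the diagonal:

$$\sum_{i \le j} \mathbf{1}\{D_i + D_j > 0\} = \sum_{i=1}^{n} \mathbf{1}\{D_i > 0\} + \sum_{i < j} \mathbf{1}\{D_i + D_j > 0\}.$$

The diagonal term is $\sum_i \mathbf{1}\{D_i > 0\}$, the number of positive differences.

For $i \ne j$ with continuous data, exactly one of $|D_i|, |D_j|$ is larger; since the larger absolute value dominates the sum, the sign of $D_i + D_j$ matches the sign of the difference with the larger absolute value. So for each $j$ with $D_j > 0$ and each index $i \ne j$ with $|D_i| < |D_j|$, the pair $\{i, j\}$ contributes $1$. The count of indices $i$ with $|D_i| \le |D_j|$ is $R_j$ (the rank of $|D_j|$), so the count of contributing $i \ne j$ is $R_j - 1$ (subtracting the term $i = j$). Hence

$$\sum_{i < j} \mathbf{1}\{D_i + D_j > 0\} = \sum_{j=1}^{n} (R_j - 1)\, \mathbf{1}\{D_j > 0\}.$$

Combining:

$$\sum_{i \le j} \mathbf{1}\{D_i + D_j > 0\} = \sum_{j=1}^{n} \mathbf{1}\{D_j > 0\} + \sum_{j=1}^{n} (R_j - 1)\, \mathbf{1}\{D_j > 0\} = \sum_{j=1}^{n} R_j\, \mathbf{1}\{D_j > 0\} = W^+.$$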
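The identity is easy to confirm numerically. The sketch below computes $W^+$ twice, once from ranks and once by counting positive pairwise sums with $i \le j$; the function names and the data are hypothetical, and the data are assumed to have no ties in absolute value.

```python
from itertools import combinations_with_replacement

def w_plus_from_ranks(d):
    """Sum of the absolute-value ranks at positive differences."""
    order = sorted(range(len(d)), key=lambda i: abs(d[i]))
    return sum(r for r, i in enumerate(order, start=1) if d[i] > 0)

def w_plus_from_walsh(d):
    """Count of pairs i <= j whose Walsh average (D_i + D_j) / 2 is positive."""
    return sum(1 for a, b in combinations_with_replacement(d, 2) if a + b > 0)

d = [1.2, -0.4, 0.9, 2.1, -0.3, 0.7, -1.5, 1.8]   # hypothetical differences
print(w_plus_from_ranks(d), w_plus_from_walsh(d))  # 27 27
```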

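∎

Theorem 8 (Pitman ARE Formula).

Under the contiguous local alternative $\theta_n = h/\sqrt{n}$ with paired differences $D_i = \theta_n + \varepsilon_i$, where $\varepsilon_i$ has density $f$ symmetric around $0$ with finite variance $\sigma^2$ and $\int f^2 < \infty$, the Pitman asymptotic relative efficiency of the Wilcoxon signed-rank test relative to the one-sample $t$-test is

$$\mathrm{ARE}(W, T) = \left( \frac{c_W}{c_T} \right)^2 = 12\, \sigma^2 \left( \int f(x)^2 \, dx \right)^2,$$

with drift coefficients $c_T = 1/\sigma$ and $c_W = \sqrt{12}\, I(f)$ where $I(f) = \int f(x)^2 \, dx$. At standard symmetric densities,

$$\mathrm{ARE}_{\text{normal}} = \frac{3}{\pi} \approx 0.955, \qquad \mathrm{ARE}_{\text{uniform}} = 1, \qquad \mathrm{ARE}_{\text{logistic}} = \frac{\pi^2}{9} \approx 1.097, \qquad \mathrm{ARE}_{\text{Laplace}} = \frac{3}{2}.$$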

Proof.

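Drift of the $t$-test. $T_n = \sqrt{n}\, \bar D / s$. Under $\theta_n$ we have $\sqrt{n}\, \bar D = h + \sqrt{n}\, \bar\varepsilon \xrightarrow{d} N(h, \sigma^2)$, and $s \to \sigma$ in probability, so $T_n \xrightarrow{d} N(h/\sigma, 1)$. The drift coefficient is $c_T = 1/\sigma$.

Drift of Wilcoxon. Apply the U-statistic representation of $W^+$ from Theorem 7. Decompose $\mathbb{E}_\theta[W^+]$ into diagonal and off-diagonal contributions:

$$\mathbb{E}_\theta[W^+] = n\, P_\theta(D_1 > 0) + \binom{n}{2} P_\theta(D_1 + D_2 > 0).$$

(a) Diagonal term. Write $D_1 = \theta + \varepsilon_1$ with $\varepsilon_1$ symmetric. Then $P_\theta(D_1 > 0) = P(\varepsilon_1 > -\theta) = F(\theta)$, where $F$ is the CDF of $\varepsilon_1$. Taylor-expand around $\theta = 0$ using $F(0) = 1/2$ and $F'(0) = f(0)$:

$$F(\theta) = \frac{1}{2} + \theta f(0) + o(\theta),$$

so $n\, P_\theta(D_1 > 0) = n/2 + n \theta f(0) + o(n\theta)$.

(b) Off-diagonal term. $P_\theta(D_1 + D_2 > 0) = P(\varepsilon_1 + \varepsilon_2 > -2\theta)$, where $\varepsilon_1 + \varepsilon_2$ has density $f * f$ (convolution), symmetric around $0$. The density at $0$ is

$$(f * f)(0) = \int f(x) f(-x) \, dx = \int f(x)^2 \, dx = I(f),$$

using the symmetry of $f$. The same Taylor expansion applied to the CDF of $\varepsilon_1 + \varepsilon_2$ gives

$$P_\theta(D_1 + D_2 > 0) = \frac{1}{2} + 2\theta\, I(f) + o(\theta).$$

Combining and subtracting the null mean $n(n+1)/4$:

$$\mathbb{E}_\theta[W^+] - \frac{n(n+1)}{4} = n \theta f(0) + n(n-1)\, \theta\, I(f) + o(n^2 \theta).$$

With $\theta = \theta_n = h/\sqrt{n}$:

$$\mathbb{E}_{\theta_n}[W^+] - \frac{n(n+1)}{4} = h\, n^{3/2}\, I(f)\, (1 + o(1)),$$

where the off-diagonal U-statistic part of order $n^{3/2}$ dominates the diagonal of order $n^{1/2}$.

The null variance of $W^+$ is $n(n+1)(2n+1)/24 \sim n^3/12$, so the standardisation divisor is $n^{3/2}/\sqrt{12}$. The standardised statistic

$$Z_W = \frac{W^+ - n(n+1)/4}{\sqrt{n(n+1)(2n+1)/24}}$$

satisfies $\mathbb{E}_{\theta_n}[Z_W] \to \sqrt{12}\, h\, I(f)$. By the U-statistic CLT (Hoeffding 1948) and contiguity of $\theta_n$ with the null,

$$Z_W \xrightarrow{d} N\!\left( \sqrt{12}\, h\, I(f),\ 1 \right), \qquad c_W = \sqrt{12}\, I(f).$$

ARE. The Pitman ARE compares the squared drift coefficients,

$$\mathrm{ARE}(W, T) = \frac{c_W^2}{c_T^2} = 12\, \sigma^2\, I(f)^2.$$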

∎

A direct numerical sanity check on the $3/\pi \approx 0.955$ figure under normality: in a Monte Carlo experiment with normal noise at a fixed sample size and shift, the ratio of the Wilcoxon empirical power to the $t$-test’s empirical power comes out just above the asymptotic $0.955$. The asymptotic ARE is the $n \to \infty$ limit, and finite-$n$ ratios bracket it.

Try Student-t with df = 5 (ARE ≈ 1.24) or contaminated normal with ε = 0.1 (ARE rises sharply with the contamination weight). The Hodges-Lehmann minimum is the parabolic density at which ARE(W, T) bottoms out at 0.864.

Remark (The Hodges-Lehmann 0.864 Floor).

A celebrated result of Hodges & Lehmann (1956) establishes that for any density $f$ symmetric with finite variance and a bounded twice-differentiable form,

$$\mathrm{ARE}(W, T) = 12\, \sigma^2 \left( \int f(x)^2 \, dx \right)^2 \ge \frac{108}{125} = 0.864.$$

Wilcoxon never loses more than ~14% asymptotic efficiency relative to the $t$-test, regardless of the underlying density, and can gain unboundedly under heavy tails. The minimum is achieved at the parabolic density $f(x) = \tfrac{3}{4}(1 - x^2)$ on $[-1, 1]$ (up to location and scale). The proof is a calculus-of-variations problem (minimise $\int f^2$ subject to symmetry and unit variance) and is reproduced in Hodges & Lehmann (1956) and Lehmann (1975, §4.2). The bound is the rare statistical bargain: distribution-freeness with a worst-case efficiency cost smaller than most practitioners’ rounding error in sample-size calculations.
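The formula $12\sigma^2 \left( \int f^2 \right)^2$ can be evaluated numerically for any symmetric density. The sketch below uses a plain midpoint rule from the standard library; the function names, integration ranges, and step count are illustrative choices, and the heavy-tailed cases are truncated at ranges where the neglected mass is negligible.

```python
from math import exp, pi, sqrt

def are_wilcoxon_vs_t(f, lo, hi, steps=100_000):
    """Midpoint-rule estimate of 12 * sigma^2 * (integral of f^2)^2
    for a density f that is symmetric about 0 and supported (numerically) on [lo, hi]."""
    h = (hi - lo) / steps
    var = int_f2 = 0.0
    for k in range(steps):
        x = lo + (k + 0.5) * h
        fx = f(x)
        var += x * x * fx * h        # second moment (mean is 0 by symmetry)
        int_f2 += fx * fx * h
    return 12 * var * int_f2 ** 2

def normal(x):
    return exp(-x * x / 2) / sqrt(2 * pi)

def laplace(x):
    return 0.5 * exp(-abs(x))

def parabolic(x):
    return 0.75 * (1 - x * x)

print(round(are_wilcoxon_vs_t(normal, -12, 12), 3))    # 0.955
print(round(are_wilcoxon_vs_t(laplace, -40, 40), 3))   # 1.5
print(round(are_wilcoxon_vs_t(parabolic, -1, 1), 3))   # 0.864
```

Because the ARE is invariant to location and scale, the particular scale chosen for each density does not affect the result.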

The Hodges-Lehmann Estimator

The Wilcoxon test gives a $p$-value but not a point estimate of the location parameter: there’s no obvious “Wilcoxon estimator of $\theta$” until you invert the test. Hodges-Lehmann’s 1963 construction does exactly that: take the median of all pairwise averages of paired differences. The result is a point estimator that sits between the sample mean and the sample median in robustness, with the same asymptotic relative efficiency to the mean that the Wilcoxon test has to the $t$-test (Theorem 11). And because the construction is test-inversion, it comes with an exact distribution-free confidence interval (Theorem 10).

A worked example with an outlier makes the case. Take $n = 8$ observations in which one is a single dominant value (think: a logging error, a server crash duration, a one-time mega-purchase). The sample mean is $1.85$, dragged up by the outlier. The sample median is $1.15$, ignoring the outlier completely. Hodges-Lehmann gives $1.20$: between the mean and median, robust to the outlier without throwing it out. The 95% HL confidence interval is $[0.30, 4.45]$, exact under symmetry of the underlying distribution.

Definition 7 (Walsh Averages and One-Sample Hodges-Lehmann Estimator).

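For paired data $D_1, \dots, D_n$, the Walsh averages are the pairwise means

$$A_{ij} = \frac{D_i + D_j}{2}, \qquad 1 \le i \le j \le n.$$

There are $M = n(n+1)/2$ of them: $n$ diagonal entries plus $\binom{n}{2}$ off-diagonal entries. The one-sample Hodges-Lehmann estimator of the population location parameter $\theta$ is

$$\hat\theta_{\mathrm{HL}} = \operatorname{median}\{A_{ij} : 1 \le i \le j \le n\}.$$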

Definition 8 (Two-Sample Hodges-Lehmann Estimator).

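For two samples $X_1, \dots, X_m$ and $Y_1, \dots, Y_n$ drawn from distributions differing by a location shift $\Delta$ (so that $Y \overset{d}{=} X + \Delta$), the two-sample Hodges-Lehmann estimator of $\Delta$ is the median of all $mn$ cross-sample differences:

$$\hat\Delta_{\mathrm{HL}} = \operatorname{median}\{Y_j - X_i : 1 \le i \le m,\ 1 \le j \le n\}.$$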
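Both estimators reduce to a median over a list of pairwise quantities, as the following standard-library sketch shows. The function names and the data are hypothetical; in particular the eight-point sample with one large value is made up for illustration and is not the article's example.

```python
from itertools import combinations_with_replacement
from statistics import mean, median

def hl_one_sample(d):
    """Median of the n(n+1)/2 Walsh averages (D_i + D_j) / 2 with i <= j."""
    return median((a + b) / 2 for a, b in combinations_with_replacement(d, 2))

def hl_two_sample(x, y):
    """Median of the m*n cross-sample differences Y_j - X_i."""
    return median(yj - xi for xi in x for yj in y)

print(hl_one_sample([1, 2, 3]))             # 2.0
print(hl_two_sample([1, 2, 3], [4, 5, 6]))  # 3

d = [0.8, 1.0, 1.1, 1.2, 1.3, 1.5, 1.6, 9.0]   # hypothetical data with one outlier
print(mean(d), median(d), hl_one_sample(d))
```

The direct construction is quadratic in the sample size, which is fine for small samples; larger samples usually call for a selection algorithm rather than materialising every pair.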

Theorem 9 (Hodges-Lehmann as Test-Inversion of Wilcoxon).

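$\hat\theta_{\mathrm{HL}}$ is the unique value $\theta$ at which the Wilcoxon signed-rank statistic applied to the shifted data $D_i - \theta$ takes its null mean $n(n+1)/4$. Equivalently, $\hat\theta_{\mathrm{HL}} = \operatorname{median}\{A_{ij}\}$.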

Proof.

Apply the U-statistic representation of $W^+$ (Theorem 7) to the shifted data $D_i - \theta$:

$$W^+(\theta) = \#\{(i, j) : i \le j,\ A_{ij} > \theta\}.$$

As $\theta$ increases from $-\infty$ to $+\infty$, the count decreases from $M = n(n+1)/2$ to $0$, jumping by $1$ each time $\theta$ crosses a Walsh average. It equals the null mean $M/2 = n(n+1)/4$ exactly when $\theta$ is positioned with $M/2$ Walsh averages above it and $M/2$ below it, i.e., at the median of the discrete set $\{A_{ij}\}$.

∎

At the notebook's n = 8 outlier example, HL = 1.20 sits between the mean (1.85) and the median (1.15). The 95% HL CI is [0.30, 4.45], exact under the Wilcoxon symmetry assumption (Theorem 10). Injecting another outlier sends the mean's RMSE up sharply, while the median stays steady and HL stays close behind it.

Theorem 10 (Distribution-Free CI from Walsh-Average Ordering).

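Let $A_{(1)} \le A_{(2)} \le \dots \le A_{(M)}$ be the sorted Walsh averages with $M = n(n+1)/2$, and let $k_\alpha$ be the largest integer satisfying $P_{H_0}(W^+ \le k_\alpha) \le \alpha/2$, the lower critical value of the discrete null distribution of $W^+$. Then

$$\left[ A_{(k_\alpha + 1)},\ A_{(M - k_\alpha)} \right]$$

is a $(1 - \alpha)$ confidence interval for the location parameter $\theta$, with coverage exact at levels achievable by the Wilcoxon discrete null and conservative otherwise.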

Proof.

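By Theorem 9 (and its symmetric twin for the upper tail), the count $W^+(\theta) = \#\{(i, j) : i \le j,\ A_{ij} > \theta\}$ drops by $1$ at each Walsh-average crossing as $\theta$ increases. With $M = n(n+1)/2$ Walsh averages and lower critical value $k_\alpha$, a two-sided level-$\alpha$ test fails to reject when

$$k_\alpha < W^+(\theta) < M - k_\alpha,$$

i.e., the count of Walsh averages strictly greater than $\theta$ is strictly between $k_\alpha$ and $M - k_\alpha$. This holds iff $\theta$ lies between the $(k_\alpha + 1)$-th and $(M - k_\alpha)$-th sorted Walsh averages, closing the interval as

$$A_{(k_\alpha + 1)} \le \theta \le A_{(M - k_\alpha)}.$$

By the duality of test inversion, the probability that this interval covers the true $\theta$ equals the probability that the level-$\alpha$ test fails to reject the truth, which is at least $1 - \alpha$ by the exactness of the Wilcoxon test under the symmetry assumption.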
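The interval can be computed by pairing the exact null distribution of $W^+$ with the sorted Walsh averages. The sketch below is one way to do it under stated assumptions: the critical value is taken as the largest $k$ with $P_{H_0}(W^+ \le k) \le \alpha/2$, the null cdf comes from a subset-sum recursion over the ranks $1, \dots, n$, and the function names are hypothetical.

```python
from itertools import combinations_with_replacement

def signed_rank_cdf(n):
    """Exact null cdf of W+ via a subset-sum recursion over ranks 1..n."""
    M = n * (n + 1) // 2
    counts = [1] + [0] * M
    for r in range(1, n + 1):
        for s in range(M, r - 1, -1):     # descending: each rank used at most once
            counts[s] += counts[s - r]
    cdf, running = [], 0
    for c in counts:
        running += c
        cdf.append(running / 2 ** n)
    return cdf

def hl_confidence_interval(d, alpha=0.05):
    """Distribution-free CI [A_(k+1), A_(M-k)] from sorted Walsh averages."""
    n = len(d)
    M = n * (n + 1) // 2
    cdf = signed_rank_cdf(n)
    # Largest k with P0(W+ <= k) <= alpha/2; none exists when n is too small.
    k = max((s for s in range(M + 1) if cdf[s] <= alpha / 2), default=-1)
    if k < 0:
        raise ValueError("sample too small for an interval at this level")
    walsh = sorted((a + b) / 2 for a, b in combinations_with_replacement(d, 2))
    return walsh[k], walsh[M - k - 1]     # 0-based indices of A_(k+1) and A_(M-k)

print(hl_confidence_interval([1, 2, 3, 4, 5, 6, 7, 8]))   # (2.0, 7.0)
```

At $n = 8$ the recursion gives $P_{H_0}(W^+ \le 3) = 5/256$ and $P_{H_0}(W^+ \le 4) = 7/256$, so $k_\alpha = 3$ at $\alpha = 0.05$ and the interval runs from the 4th to the 33rd of the 36 sorted Walsh averages.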

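∎

Theorem 11 (Hodges-Lehmann Asymptotic Distribution and ARE).

Under the regularity conditions of Theorem 8 ($f$ symmetric with finite variance $\sigma^2$, density continuous and bounded, $\int f^2 < \infty$),

$$\sqrt{n}\, (\hat\theta_{\mathrm{HL}} - \theta) \xrightarrow{d} N\!\left( 0,\ \frac{1}{12\, I(f)^2} \right), \qquad I(f) = \int f(x)^2 \, dx.$$

Consequently:

$$\mathrm{ARE}(\mathrm{HL}, \text{mean}) = 12\, \sigma^2\, I(f)^2, \qquad \mathrm{ARE}(\mathrm{HL}, \text{median}) = \frac{3\, I(f)^2}{f(0)^2}.$$

The HL estimator inherits the same efficiency advantages as the Wilcoxon test (Theorem 8), with a celebrated $0.864$ asymptotic-efficiency floor relative to the sample mean (Hodges & Lehmann 1956).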

Proof.

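The first claim follows from Theorem 8 and the test-inversion structure of Theorem 9. The Wilcoxon statistic standardised under $\theta_n = h/\sqrt{n}$ satisfies $Z_W \xrightarrow{d} N(c_W h, 1)$ with $c_W = \sqrt{12}\, I(f)$. By Theorem 9, $\hat\theta_{\mathrm{HL}}$ is the value of $\theta$ at which $W^+(\theta) - n(n+1)/4$ equals zero, i.e., the zero of the standardised Wilcoxon as a function of the location parameter.

A delta-method argument inverts the asymptotic-mean function around its zero. The mean function shifts linearly in $h$ with slope $\sqrt{12}\, I(f)$ in standardised units, equivalent to slope $n^2 I(f)$ per unit of $\theta$ in the un-standardised sum; the noise scale is $n^{3/2}/\sqrt{12}$. The implicit-function calculation yields

$$\sqrt{n}\, (\hat\theta_{\mathrm{HL}} - \theta) \approx \frac{Z}{\sqrt{12}\, I(f)}, \quad Z \sim N(0, 1), \qquad \text{so} \qquad \sqrt{n}\, (\hat\theta_{\mathrm{HL}} - \theta) \xrightarrow{d} N\!\left( 0,\ \frac{1}{12\, I(f)^2} \right).$$

The full Bahadur-representation foundation for this inversion (uniform convergence of the rank-statistic process) is in Lehmann (1975, §4.3) and van der Vaart (1998, §13).

ARE comparisons. The sample mean has asymptotic variance $\sigma^2$, so

$$\mathrm{ARE}(\mathrm{HL}, \text{mean}) = \frac{\sigma^2}{1 / (12\, I(f)^2)} = 12\, \sigma^2\, I(f)^2.$$

The sample median has asymptotic variance $1 / (4 f(0)^2)$, so

$$\mathrm{ARE}(\mathrm{HL}, \text{median}) = \frac{1 / (4 f(0)^2)}{1 / (12\, I(f)^2)} = \frac{3\, I(f)^2}{f(0)^2}.$$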

∎

Remark (When the Sample Median Beats HL (Laplace = MLE)).

The Hodges-Lehmann estimator is not a universal optimum: it loses to the sample median when the data are Laplace-distributed. Under the Laplace density $f(x) = \frac{1}{2b} e^{-|x - \theta| / b}$, the maximum-likelihood estimator of the location parameter is the sample median (because the Laplace log-likelihood is the negative $L_1$ loss, up to scale and an additive constant). Plugging in the Laplace values $f(0) = 1/(2b)$, $I(f) = 1/(4b)$ for the centred density:

$$\mathrm{ARE}(\mathrm{HL}, \text{median}) = \frac{3 \cdot (1/(4b))^2}{(1/(2b))^2} = \frac{3}{4}.$$

The median achieves asymptotic variance $b^2$, while HL achieves $4b^2/3$, a 33% inflation. So under Laplace, use the median, not HL. The general moral: HL is a robust compromise across density shapes, not the best estimator at any single one. When the parametric form is known, the MLE is what beats it.

Permutation Tests with Non-Rank Statistics

The permutation principle from §2 doesn’t require rank statistics. Any test statistic $T$ permits a permutation test, and dropping the rank structure can buy back the small power loss that ranks impose under nice distributions. The trade-off is the standard one: parametric efficiency (when assumptions hold) vs robustness to outliers and tails (when they don’t).

The canonical example is the permutation $t$-test: take the same two-sample setting from §3 with the Welch $t$-statistic

$$T_{\text{Welch}} = \frac{\bar Y - \bar X}{\sqrt{s_X^2 / m + s_Y^2 / n}},$$

but instead of comparing to a $t$ reference distribution (which requires the Welch-Satterthwaite approximation), enumerate or sample the label-swap permutations and compute the empirical permutation distribution. Theorem 3 gives exact level-$\alpha$ control under the exchangeability null, regardless of how non-normal the data are. A worked example: two small samples from a heavy-tailed distribution, one shifted relative to the other. The classical Welch $t$-test $p$-value is off because the distribution is heavy-tailed; the permutation $p$-value is close to it, but the permutation version is the one that’s exactly right.

Remark (Raw vs Ranks — A Power Trade-Off, Not a Validity Trade-Off).

Both the permutation $t$-test and Mann-Whitney are exact under exchangeability; they differ only in the choice of statistic. The choice is a power decision, not a validity one:

- Raw permutation (mean difference, Welch $t$, etc.) is more powerful when the parametric model is roughly right. Asymptotically, the permutation $t$ and the classical $t$ share the same drift coefficient $c_T = 1/\sigma$.

- Rank permutation (Wilcoxon rank-sum, Mann-Whitney $U$) is more powerful when tails are heavy or outliers are present. Drift coefficient $c_W = \sqrt{12} \int f^2$, which dominates exactly when $12\, \sigma^2 \left( \int f^2 \right)^2 > 1$.

- Random permutation (sampled rather than enumerated): when $|G|$ is too large for full enumeration, sample $B$ random permutations $g_1, \dots, g_B$. The Phipson-Smyth $p$-value

$$p_{\text{PS}} = \frac{1 + \sum_{b=1}^{B} \mathbf{1}\{T(g_b X) \ge T(X)\}}{B + 1}$$

is conservative; the “+1”s adjust for the observed value being one realisation, and the construction guarantees $P(p_{\text{PS}} \le \alpha) \le \alpha$ regardless of $B$.

The key point: the permutation framework is the umbrella, and rank tests are just one corner of it. When you encounter a clever test statistic in a paper, the question to ask is not “does it have a known asymptotic distribution?” — it’s “is the null exchangeable?” If the answer is yes, you have a finite-sample exact test for free.
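A sampled permutation test with the "+1" correction takes only a few lines. The following is a minimal sketch using the standard library; the function names, the default of 999 permutations, and the seed are illustrative assumptions, and the well-separated toy samples are chosen so that no random relabelling can realistically match the observed statistic.

```python
import random
from statistics import mean

def monte_carlo_permutation_p(x, y, stat, n_perm=999, seed=0):
    """Sampled permutation p-value with the +1 correction in numerator and denominator."""
    rng = random.Random(seed)
    pooled = list(x) + list(y)
    m = len(x)
    observed = stat(x, y)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)                       # random relabelling of the pooled sample
        if stat(pooled[:m], pooled[m:]) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)

def abs_mean_diff(x, y):
    """Two-sided statistic: absolute difference in sample means."""
    return abs(mean(y) - mean(x))

x = list(range(15))               # hypothetical control values
y = list(range(100, 115))         # hypothetical treatment values, far above x
p_ps = monte_carlo_permutation_p(x, y, abs_mean_diff)
print(p_ps)   # 0.001
```

The smallest attainable value is $1/(B+1)$, so the number of sampled permutations sets the resolution of the reported $p$-value.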


A/B Testing as Randomization Inference

Online controlled experiments (A/B tests in tech, RCTs in medicine and policy) are the natural home of permutation inference, but with a twist: they are really randomization tests in the Fisher sense (per Remark 1), not permutation tests in the population-sampling sense. The validity claim is different and considerably stronger: the experimenter’s coin flip provides exactness regardless of the metric’s distribution.

The setup. A platform randomly assigns each of $n$ users to control (A) or treatment (B), measures an outcome metric (revenue, session length, conversion rate, ad clicks per impression) per user, and asks whether the treatment shifted the metric. Standard practice runs Welch’s $t$-test on the means and reports a $p$-value. The trouble: heavy-tailed metrics (revenue is famously log-normal-ish) can break the Welch-Satterthwaite reference distribution, producing tests with the wrong size. Randomization-inference-via-permutation gives an exactly correct test even when Welch is wrong, and with one extra ingredient (a pre-period covariate) the CUPED adjustment can dramatically reduce variance without sacrificing exactness.

Definition 9 (Fisher's Sharp Null and the Randomization Distribution).

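In an experiment, each unit $i$ has potential outcomes $Y_i(0), Y_i(1)$ under control and treatment respectively. Treatment is assigned by a known random mechanism with assignment vector $Z = (Z_1, \dots, Z_n) \in \{0, 1\}^n$, and the observed outcome is $Y_i = Z_i Y_i(1) + (1 - Z_i) Y_i(0)$. Fisher’s sharp null is

$$H_0^F : Y_i(1) = Y_i(0) \quad \text{for all } i = 1, \dots, n.$$

Under $H_0^F$, $Y_i$ is constant in $Z_i$, so swapping $Z$ to any other assignment $Z'$ drawn from the same randomization mechanism gives the same observed $Y$ but a different test statistic $T(Y, Z')$. The randomization distribution of $T$ is the distribution of $T(Y, Z')$ where $Z'$ is drawn from the assignment mechanism.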

Theorem 12 (Randomization-Test Exactness Under Fisher's Sharp Null).

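Let $T(Y, Z)$ be any test statistic, and let $Z'$ range over the support $\Omega$ of the randomization mechanism (e.g., all $\binom{n}{n_1}$ ways of assigning $n_1$ of $n$ users to treatment under simple random sampling). Define the randomization $p$-value

$$p_{\text{rand}} = \frac{1}{|\Omega|} \sum_{Z' \in \Omega} \mathbf{1}\{T(Y, Z') \ge T(Y, Z)\}.$$

Then under $H_0^F$, $P(p_{\text{rand}} \le \alpha) \le \alpha$ for every $\alpha \in [0, 1]$, with equality at multiples of $1/|\Omega|$.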

Proof.

Under $H_0^F$, $Y$ is fixed (not random: every unit’s outcome is determined and unchanged by relabelling). The only randomness is in $Z$. Apply Theorem 3 with the group $G$ being the action of permuting $Z$’s entries (or, equivalently, swapping which $Y_i$’s are labelled “treatment”): the joint distribution of $(Y, Z)$ is invariant under group composition, so the rank of $T(Y, Z)$ within $\{T(Y, Z') : Z' \in \Omega\}$ is uniform on $\{1, \dots, |\Omega|\}$. The remainder of the argument is identical to the proof of Theorem 3.

The conceptual difference from §2’s permutation-test framing is what gives Theorem 12 its operational power. Validity comes from the experimenter’s coin flip, not from any assumption about the population. The data-generating process for the $Y_i$’s can be arbitrary (heteroscedastic, heavy-tailed, autocorrelated across users) and the randomization inference is still exact.

∎

Remark (CUPED: Variance Reduction Without Breaking Randomization).

CUPED (Deng, Xu, Kohavi & Walker 2013, “Controlled-experiment Using Pre-Experiment Data”) replaces the metric $Y_i$ with

$$\tilde Y_i = Y_i - \hat\beta\, (X_i - \bar X), \qquad \hat\beta = \frac{\widehat{\operatorname{Cov}}(X, Y)}{\widehat{\operatorname{Var}}(X)},$$

where $X_i$ is a pre-experiment covariate per user (e.g., the user’s revenue in the prior month) and $\bar X$ is the pooled sample mean. The adjusted variable has the same expected mean as $Y$ (the term $\hat\beta\, (X_i - \bar X)$ has expectation zero) but variance $(1 - \rho^2) \operatorname{Var}(Y)$, strictly smaller whenever $X$ and $Y$ are correlated.

Crucially, the adjustment is symmetric in the labels (the same $\hat\beta$ and the same $\bar X$ are used for both groups), so under $H_0^F$ the $\tilde Y_i$ values remain fixed under label permutations. Theorem 12’s randomization-inference exactness carries through unchanged. Variance reduction translates directly to power gain. Typical pre-period correlations of $\rho \approx 0.7$ (so $1 - \rho^2 \approx 0.5$) deliver power gains equivalent to a 50% reduction in sample size, for free, from data the experimenter already had.
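The adjustment itself is a one-line transformation once the pooled coefficient is known. The sketch below assumes the usual pooled choice, sample covariance of covariate and outcome divided by sample variance of the covariate, computed without reference to the treatment labels; the function name and the simulated data are hypothetical.

```python
import random
from statistics import mean, pvariance

def cuped_adjust(y, x):
    """Return Y_i - beta * (X_i - mean(X)), with beta = pooled Cov(X, Y) / Var(X).
    Labels are never used, so the adjusted values are invariant to relabelling."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    beta = sxy / sxx
    return [yi - beta * (xi - x_bar) for xi, yi in zip(x, y)]

# Hypothetical data: the outcome is the pre-period covariate plus independent noise.
rng = random.Random(0)
x_pre = [rng.gauss(10, 2) for _ in range(200)]
y_out = [xi + rng.gauss(0, 2) for xi in x_pre]

y_adj = cuped_adjust(y_out, x_pre)
ratio = pvariance(y_adj) / pvariance(y_out)
print(mean(y_out) - mean(y_adj))   # approximately 0: the pooled mean is unchanged
print(ratio)                        # equals 1 - r^2 for the sample correlation r
```

The adjusted values can then be passed to any randomization test in place of the raw outcomes.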

At log-normal $Y$ with $n = 80$, $\sigma_{\log} = 2$ and $\Delta = 0$, the Welch-$t$ rejection rate settles around 0.030 (under-rejecting) while the randomization test stabilizes at ~0.05. Exactness still holds with CUPED switched on (Remark 6), and raising $\Delta$ shows the power gain.

Limitations: Ties, Power, and the Covariate Problem

The rank-and-permutation toolkit is powerful, but it has three honest limitations worth flagging before we close. Ties violate the continuity assumption of §§1, 3, 4 and require a midrank correction (otherwise the test under-rejects). The discreteness of small-$n$ exact null distributions can erase the asymptotic Wilcoxon advantage at the very sample sizes where it should matter most. And the framework does not extend cleanly to covariate-adjusted inference: you cannot easily ask “is the treatment effect non-zero, controlling for age and baseline severity” within rank tests alone. Each limitation has a partial fix; the third one points outward to quantile regression and conformal prediction for the structural answer.

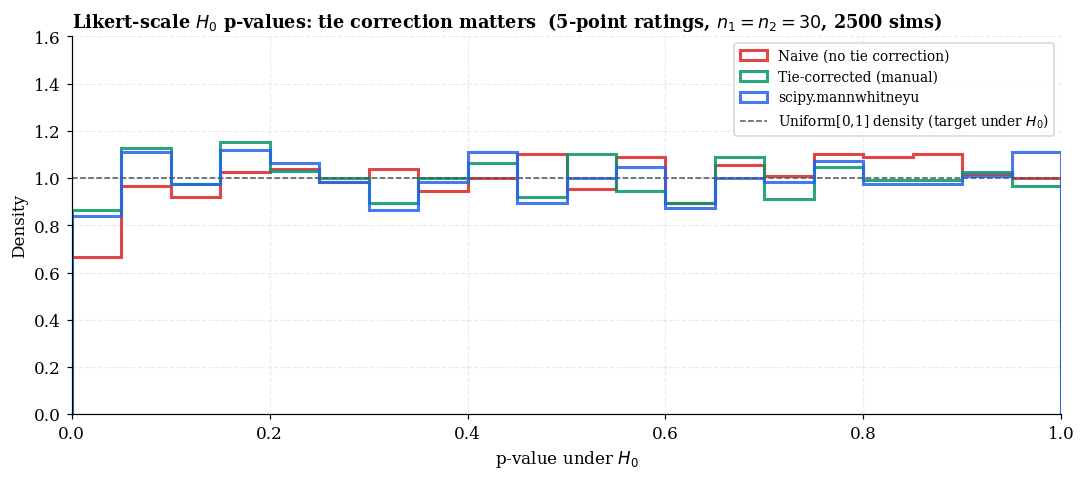

Remark (Tie Correction — Naive Variance Under-Rejects).

The clean null distributions of §1, §3, §4 assume continuous data with no ties. Real data ties — especially on Likert scales (5- or 7-point ratings), integer counts, or coarsely-binned measurements. The standard fix replaces tied values with their midrank (the average of the ranks they would have occupied) and corrects the null variance.

For Mann-Whitney with tie-group sizes $t_1, \dots, t_g$,

$$\operatorname{Var}(U) = \frac{mn(N+1)}{12} \left[ 1 - \frac{\sum_{k=1}^{g} (t_k^3 - t_k)}{N^3 - N} \right].$$

For Wilcoxon signed-rank with tied $|D_i|$’s,

$$\operatorname{Var}(W^+) = \frac{n(n+1)(2n+1)}{24} - \frac{\sum_{k=1}^{g} (t_k^3 - t_k)}{48}.$$

scipy.stats.mannwhitneyu and scipy.stats.wilcoxon apply these corrections automatically. Skipping them produces tests with wrong size, and the direction is worth getting right. The corrected variance is smaller than the uncorrected one (the bracketed factor is strictly less than $1$ when ties exist). Using the larger uncorrected variance in the $Z$-denominator inflates the denominator, shrinks $|Z|$, and inflates $p$-values, making the uncorrected test conservative (under-rejecting), sacrificing power for no Type I error protection.
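To see the size of the correction on coarse data, the tie-corrected Mann-Whitney variance can be computed directly. This is a sketch of the standard factor form $\frac{mn(N+1)}{12}\left[1 - \sum_k (t_k^3 - t_k)/(N^3 - N)\right]$; the function name and the Likert-style toy samples are hypothetical.

```python
from collections import Counter

def mann_whitney_variance(x, y, tie_correct=True):
    """Null variance of U, optionally with the standard tie correction."""
    m, n = len(x), len(y)
    N = m + n
    var = m * n * (N + 1) / 12
    if tie_correct:
        # t_k = size of each group of equal values in the pooled sample
        ties = sum(t ** 3 - t for t in Counter(list(x) + list(y)).values())
        var *= 1 - ties / (N ** 3 - N)
    return var

x = [1, 2, 2, 3]      # hypothetical Likert-style responses
y = [2, 3, 3, 4]
print(mann_whitney_variance(x, y, tie_correct=False))   # 12.0
print(mann_whitney_variance(x, y))                      # smaller than 12
```

Here two tie groups of size 3 give $\sum_k (t_k^3 - t_k) = 48$ against $N^3 - N = 504$, so the corrected variance is about 9.5% below the uncorrected one.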

Remark (Discreteness at Very Small $n$).

The Hodges-Lehmann $0.864$ lower bound (Remark 3) says rank tests lose at most 14% asymptotic efficiency vs the $t$-test even under normality, and gain unboundedly under heavy tails. But asymptotic efficiency is not the same as finite-sample dominance.

At $m = n = 4$ (so $\binom{8}{4} = 70$ possible rank assignments), the achievable significance levels for an exact Mann-Whitney test are restricted to multiples of $1/70$, and the smallest two-sided level above zero is $2/70 \approx 0.029$. To get a level-0.05 test, we accept the next-larger achievable level $4/70 \approx 0.057$, which is effectively a level-0.057 test. The CLT-based $t$-test, by contrast, can hit any nominal level on the continuum.

This discreteness pinch shows up most exactly where rank tests should otherwise win (at very small $n$ with heavy-tailed data), and it can erase the asymptotic Wilcoxon advantage. The fix is to keep using the exact null distribution (rather than its normal approximation) but to acknowledge that the achievable $\alpha$ values are restricted, and report the actual achieved level rather than the nominal one. scipy.stats.mannwhitneyu(method='exact') returns the exact discrete $p$-value.
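The attainable levels can be listed by enumerating the exact null of $U$. The sketch below assumes symmetric two-sided rejection regions of the form $\{U \le c\} \cup \{U \ge mn - c\}$ and uses exact fractions; the function name is hypothetical.

```python
from fractions import Fraction
from itertools import combinations

def two_sided_levels(m, n):
    """Attainable levels of the exact two-sided Mann-Whitney test, smallest first.
    Rejection regions are {U <= c} union {U >= mn - c} for c = 0, 1, 2, ..."""
    N = m + n
    counts = {}
    for y_ranks in combinations(range(1, N + 1), n):
        u = sum(y_ranks) - n * (n + 1) // 2
        counts[u] = counts.get(u, 0) + 1
    total = sum(counts.values())
    levels, tail = [], 0
    for c in range((m * n + 1) // 2):       # only c < mn/2 keeps the two tails disjoint
        tail += counts.get(c, 0)
        levels.append(Fraction(2 * tail, total))
    return levels

levels = two_sided_levels(4, 4)
print([str(l) for l in levels[:3]])   # ['1/35', '2/35', '4/35']
print(float(levels[0]), float(levels[1]))
```

The first two entries are $2/70$ and $4/70$, so no exact two-sided test at $m = n = 4$ has level between roughly 0.029 and 0.057.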

Remark (Forward Connections — Quantile Regression and Conformal Prediction).

The real limitation of rank tests is the covariate problem. Rank tests don’t extend cleanly to “test whether the treatment effect is non-zero, controlling for age, sex, and baseline severity.” The mean-rank framework lacks the regression structure that lets you partial out covariates without sacrificing exactness. Stratified rank tests like van Elteren (1960) exist but apply only to discrete strata (age bins, sex); for continuous covariates, they don’t help.

Two clean answers come from elsewhere in the T4 track:

- Quantile Regression. Replaces the squared-error loss of OLS with the asymmetric pinball loss to recover conditional quantiles given covariates. Carries the robust-to-tails ethos of Wilcoxon into a regression framework: the conditional median is the $L_1$-loss minimiser, and conditional quantiles handle heavy-tailed outcomes natively.

- Conformal Prediction. Turns finite-sample exchangeability arguments — exactly the assumption underwriting permutation inference here — into prediction intervals with marginal coverage guaranteed for any black-box predictor. The exchangeability that powers Theorems 3 and 12 in this topic is the same exchangeability that powers conformal’s Theorem 1.

The shared mathematical primitive — exchangeability as a finite-sample statistical engine — ties the T4 track together. This topic deploys it for testing; quantile regression and conformal prediction deploy it for conditional estimation and prediction respectively.
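To make the pinball loss in the quantile-regression item concrete, here is a small sketch of the intercept-only case, where minimising the loss over a single constant recovers a sample quantile. It is not a regression fit with covariates; the function names `pinball_loss` and `best_constant` and the toy data are assumptions made for illustration.

```python
def pinball_loss(residual, tau):
    """Pinball (check) loss: tau * r for r >= 0, (1 - tau) * |r| for r < 0."""
    return residual * (tau - (1.0 if residual < 0 else 0.0))


def best_constant(ys, tau):
    """Constant minimising total pinball loss, searched over the data points.

    The total loss is piecewise linear and convex in the constant, so a
    minimiser can always be found at one of the observations.
    """
    return min(sorted(ys), key=lambda c: sum(pinball_loss(y - c, tau) for y in ys))


# Toy sample with one gross outlier (hypothetical data).
ys = [1.0, 2.0, 3.0, 4.0, 1000.0]

median_fit = best_constant(ys, 0.5)     # tau = 0.5 gives the L1 (median) fit
lower_fit = best_constant(ys, 0.25)     # a lower quantile
mean_fit = sum(ys) / len(ys)            # squared-error fit, for contrast

print(median_fit, lower_fit, mean_fit)
```

On this sample the median fit stays at 3.0 while the squared-error fit is dragged to 202.0 by the single outlier, which is the same robustness to tails that motivates the rank tests in this topic.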

Notation Reference

| Symbol | Meaning | Section |
|---|---|---|
| $D_i$ | Paired difference | §1 |
|  | Indicator of event | throughout |
|  | Sign-test statistic | §1 |
|  | Rank of $\lvert D_i \rvert$ in the absolute-value sample | §1 |
|  | Wilcoxon signed-rank statistic | §1 |
|  | Finite group of null-preserving transformations | §2 |
|  | Transformation of the data vector by a group element | §2 |
|  | Equality in distribution | §2 |
|  | Permutation $p$-value | §2, §7, §8 |
|  | Two-sample sizes and pooled total | §3 |
|  | Rank of an observation in the pooled sample | §3 |
|  | Wilcoxon rank-sum statistic | §3 |
|  | Mann-Whitney statistic | §3 |
|  | Population parameter estimates | §3 |
|  | Mean rank within a group | §4 |
|  | Kruskal-Wallis statistic | §4 |
|  | Limiting allocation in $k$-sample CLT | §4 |
|  | Contiguous local alternative | §5 |
|  | Drift coefficients | §5 |
|  | Density-functional integral | §5, §6 |
|  | Walsh average | §6 |
|  | Number of Walsh averages | §6 |
|  | One-sample / two-sample Hodges-Lehmann estimators | §6 |
|  | Walsh-average order statistic | §6 |
|  | Lower critical value of Wilcoxon null | §6 |
|  | Welch $t$-statistic | §7 |
|  | Phipson-Smyth $p$-value | §7, §8 |
|  | Potential outcomes | §8 |
|  | Treatment assignment indicator | §8 |
|  | Fisher’s sharp null | §8 |
|  | CUPED-adjusted outcome | §8 |
|  | Pooled CUPED coefficient | §8 |
|  | Pre/in-period correlation | §8 |
|  | Size of each tie group | §9 |
|  | Tie-correction term | §9 |

Connections and Further Reading

This topic is the testing-and-inference foundation of formalML’s T4 (Nonparametric & Distribution-Free) track, sitting alongside conformal prediction (prediction under exchangeability) and quantile regression (covariate-adjusted location). The shared mathematical primitive — exchangeability as a finite-sample inferential engine — ties the three together; the difference is what’s being inferred (a parameter, a future observation, or a conditional quantile).

The classical foundations live in Wilcoxon (1945), Mann & Whitney (1947), Kruskal & Wallis (1952), Pitman (1937), and the Hodges-Lehmann pair (1956 for the ARE bound, 1963 for the estimator and CI). Fisher’s Design of Experiments (1935) is the source of randomization inference. Modern book-length treatments are Lehmann’s Nonparametrics (2006, revised from the 1975 Holden-Day edition) and Hájek-Šidák-Sen’s Theory of Rank Tests (1999) for the asymptotic theory in §§4–6. Van der Vaart’s Asymptotic Statistics (1998), chapters 13 (rank tests) and 14 (relative efficiency), supplies the modern measure-theoretic foundation. For the A/B-testing applications in §8, Kohavi-Tang-Xu’s Trustworthy Online Controlled Experiments (2020) is the operational guide, and Deng et al. (2013) is the original CUPED paper. See the References section below for the complete bibliography.

Connections

- conformal-prediction. Both topics rest on exchangeability as the single assumption providing finite-sample exact inference. Where this topic uses exchangeability for testing (Theorems 3, 12), conformal uses it for prediction (Theorem 1 of conformal-prediction). The shared mathematical primitive ties the T4 track together; the difference is in what's being inferred: a parameter (here) or a future observation (there).

- quantile-regression. Quantile regression is the covariate-adjusted counterpart of rank-based location inference. Where Wilcoxon and Hodges-Lehmann handle the unconditional location parameter, quantile regression handles the conditional quantile given $X$, picking up the heavy-tail-robust ethos in a regression framework. §9 here flags the covariate problem as the structural limitation of rank tests; quantile regression is one of two clean answers to it.

- prediction-intervals. T4's track closer generalizes §6 here from a Hodges-Lehmann *confidence interval* for a location parameter to an HL-style *prediction interval* for the next observation. The proof of Theorem 3 of prediction-intervals cites Theorem 10 here, and Theorem 5.3 (HL/conformal asymptotic equivalence) leans on the §5 Walsh-average asymptotic theory. The §6 batch comparison there documents HL's batch-coverage shortfall on shared calibration sets, a regime distinction worth carrying back to single-sample HL applications.

- statistical-depth. T4 track closer. Depth-induced multivariate ranks are the multivariate generalization of the empirical-CDF ordering used here. Hodges-Lehmann-style multivariate rank tests built on depth-induced ranks are distribution-free under elliptical symmetry and inherit the depth consistency theorem of statistical-depth §4.1 (the multivariate version of the §3 Wilcoxon null).

References & Further Reading

- Wilcoxon (1945). Individual comparisons by ranking methods. Biometrics Bulletin. Foundational signed-rank and rank-sum proposals.

- Mann & Whitney (1947). On a test of whether one of two random variables is stochastically larger than the other. Annals of Mathematical Statistics. The U statistic and its null distribution.

- Kruskal & Wallis (1952). Use of ranks in one-criterion variance analysis. JASA. k-sample extension and the chi-squared asymptotic null.

- Hodges & Lehmann (1956). The efficiency of some nonparametric competitors of the t-test. Annals of Mathematical Statistics. Theorem 8's ARE formula and the 0.864 lower bound.

- Hodges & Lehmann (1963). Estimates of location based on rank tests. Annals of Mathematical Statistics. The HL estimator and exact distribution-free CI.

- Fisher (1935). The Design of Experiments (book). The randomization-inference framework underlying §8.

- Lehmann (2006). Nonparametrics: Statistical Methods Based on Ranks (book). Standard book-length reference; revised edition of the 1975 Holden-Day original. Sections on rank tests and ARE.

- van der Vaart (1998). Asymptotic Statistics (book). Chapters 13 (rank tests) and 14 (relative efficiency) supply the modern measure-theoretic foundation.

- Ernst (2004). Permutation methods: A basis for exact inference. Statistical Science. Concise introduction to the permutation framework.

- Phipson & Smyth (2010). Permutation P-values should never be zero: Calculating exact p-values when permutations are randomly drawn. The (1+#extreme)/(1+B) Phipson-Smyth correction used in §§2, 7, 8.

- Kohavi, Tang & Xu (2020). Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing (book). Chapters 17–18 cover randomization inference and CUPED-style variance reduction (§8).

- Deng, Xu, Kohavi & Walker (2013). Improving the sensitivity of online controlled experiments by utilizing pre-experiment data. WSDM 2013. Original CUPED paper underlying §8 Remark 6.